AI Legal Writing Tools: Capabilities and Risks

Table of Contents

- Understanding AI Legal Writing Capabilities

- The Hallucination Crisis in Legal AI

- Landmark Hallucination Cases and Sanctions

- Court-Imposed AI Standing Orders

- ABA Formal Opinion 512 and Ethical Obligations

- Practical Applications and Best Practices

- Citation Verification Protocols

- Managing Confidentiality and Privilege Concerns

- The Bottom Line

Introduction

AI legal writing tools have become integral to law firm operations, promising faster brief drafting, automated citation checking, and style consistency. Despite their appeal, the risks are substantial. A Stanford study found that general-purpose language models hallucinated between 69% and 88% of the time on legal queries. Follow-up research on purpose-built legal tools found that Westlaw’s AI was accurate only 42% of the time, with a hallucination rate of 33%, while Lexis+ AI was accurate 65% of the time, with a hallucination rate of 17%. Courts have sanctioned attorneys for filing AI-generated inaccuracies and have issued standing orders requiring verification of AI output. This guide explores the capabilities and limitations of AI legal tools and offers strategies to reduce the risk of sanctions or malpractice claims.

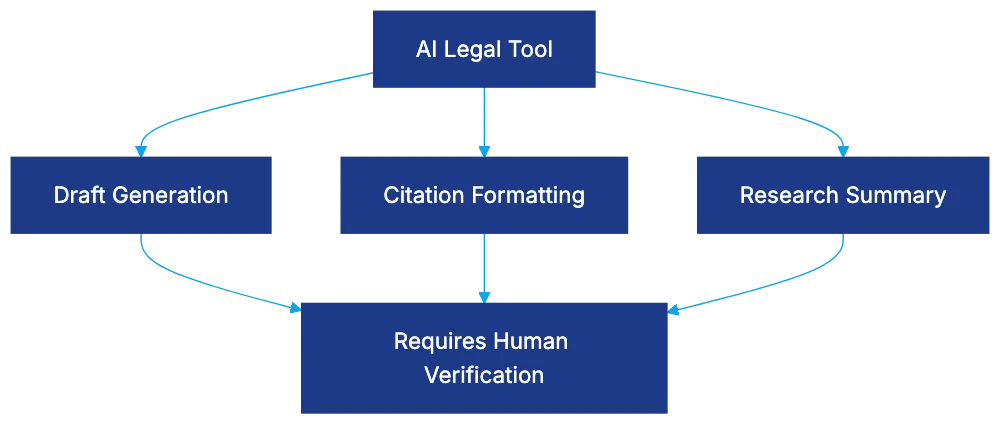

Understanding AI Legal Writing Capabilities

Used under attorney supervision, AI for legal documents offers several practical functions:

- Brief writing assistance: Structures arguments and suggests improvements.

- Motion drafting tools: Create drafts from fact patterns and law, saving time.

- Legal memo generation: Summarizes research for internal review.

- Citation checking tools: Ensure formatting consistency and flag incomplete references.

These tools use language models trained on vast amounts of text, including court opinions. They predict likely next words from patterns in that training data, which explains both their utility and their unreliability: they reproduce the form of legal writing, including plausible-looking citations, but cannot reliably tell real cases from invented ones.

Style consistency is a major strength. AI keeps citation format, heading structure, and tone uniform across documents, which matters most to paralegals handling large-scale litigation.
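As a rough illustration of the citation-checking function listed above, the following Python sketch flags reporter citations that lack a court-and-year parenthetical. The reporter list, the regular expression, and the function name `flag_incomplete` are simplifying assumptions rather than any vendor's method, and the check covers format only: it says nothing about whether the cited case exists.

```python
import re

# Assumed, deliberately short list of federal reporters (longer names first
# so that "F. Supp. 2d" is tried before "F. Supp.").
REPORTERS = r"(?:U\.S\.|S\. Ct\.|F\.2d|F\.3d|F\.4th|F\. Supp\. 2d|F\. Supp\. 3d|F\. Supp\.)"

# volume, reporter, first page, optional pin cite, optional "(court year)".
CITE = re.compile(
    rf"\b(\d{{1,4}}) ({REPORTERS}) (\d{{1,5}})(?:, \d{{1,5}})?( \([^)]*\d{{4}}\))?"
)


def flag_incomplete(text):
    """Return citations that have no trailing parenthetical containing a year."""
    flagged = []
    for match in CITE.finditer(text):
        if match.group(4) is None:  # the year parenthetical is missing
            flagged.append(match.group(0))
    return flagged


# "Placeholder v. Example" is a made-up name used only to exercise the pattern.
sample = "See Roe v. Wade, 410 U.S. 113 (1973); Placeholder v. Example, 123 F.3d 456."
print(flag_incomplete(sample))
```

A flagged citation only means the reference looks incomplete. A citation that passes this check can still point to a case that was never decided, so existence and accuracy need separate verification.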

The Hallucination Crisis in Legal AI

Stanford HAI research reports high hallucination rates for AI legal writing programs. General-purpose language models hallucinated on 69% to 88% of specific legal queries. Purpose-built legal AI fared better but was far from error-free: Westlaw’s AI research tool hallucinated on roughly one-third of queries (33%), and Lexis+ AI showed a 17% rate.

Damien Charlotin’s database documents 486 cases worldwide of AI-generated fabrications in court filings as of late 2025, with two to three new cases appearing daily. The problem is accelerating despite repeated warnings.

Landmark Hallucination Cases and Sanctions

Mata v. Avianca: Attorney Steven Schwartz used ChatGPT for legal research and filed a brief citing six fabricated cases. His failure to verify them led to sanctions and an order to notify the judges falsely named as authors of the fabricated opinions.

Noland v. Land of the Free: The first California appellate case to impose sanctions for AI hallucinations. Attorney Amir Mostafavi received a $10,000 sanction and was referred to the State Bar.

These incidents show how overconfidence in AI accuracy, combined with deadline pressure, leads attorneys to file unverified work.

Court-Imposed AI Standing Orders

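On May 30, 2023, Judge Brantley Starr of the Northern District of Texas issued an AI-specific standing order requiring attorneys to certify that any AI-generated content had been verified. Similar orders followed from over 300 federal judges, and they fall into three broad types:

- Certification-required orders: Attorneys certify either that no generative AI was used or that a human verified everything it produced.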

- Disclosure-only orders: Attorneys identify AI-generated portions.

- Reminder orders: Reiterate Rule 11 obligations without new AI-specific rules.

Practices vary from judge to judge, and some orders require detailed disclosure of the AI platform used and the verification performed. Legal staff should therefore check the applicable standing order before every filing and confirm compliance.

ABA Formal Opinion 512 and Ethical Obligations

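ABA Formal Opinion 512 clarifies how the existing Model Rules apply to AI use in legal practice; it does not create new rules. Key obligations include:

- Rule 3.3 (candor toward the tribunal): Verify AI-generated citations to avoid making false statements to a court.

- Rule 1.1 (competence): Understand the capabilities and limitations of the AI tools in use.

- Rule 5.3 (supervision of nonlawyer assistance): Treat AI tools as assistance that requires attorney supervision.

Taken together, these rules make verification of AI output mandatory.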

Practical Applications and Best Practices

Effective uses of legal writing AI include:

- Producing first drafts of routine motions: AI provides the framework; human revision is essential.

- Verifying citation formats: AI checks Bluebook consistency; confirming that each case exists is a separate and crucial step.

- Memo generation for research: Use AI to surface key terms and doctrines; rely on human research for completeness.

- Maintaining style consistency: AI flags inconsistencies, valuable in large-scale litigation.

AI struggles with niche legal issues due to limited training data.

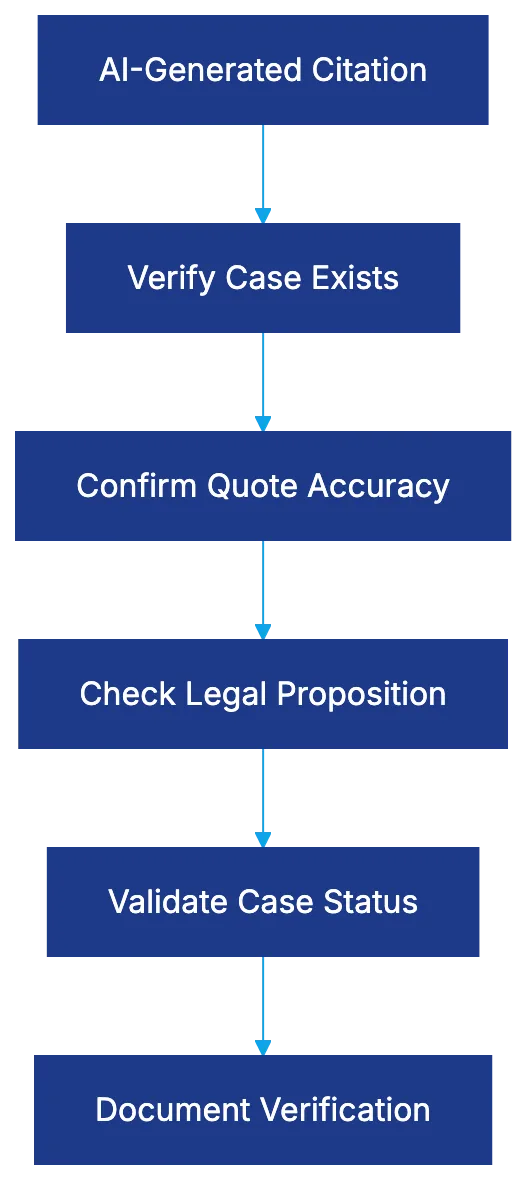

Citation Verification Protocols

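Every AI-assisted legal document should pass through a mandatory verification protocol:

- Confirm that each case exists through platforms like Westlaw or Lexis.

- Match AI-supplied quotations against the actual opinion text.

- Confirm that each cited case actually supports the proposition it is cited for.

- Use Shepard’s or KeyCite to confirm the case is still good law.

- Verify procedural details such as the court, date, and disposition.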

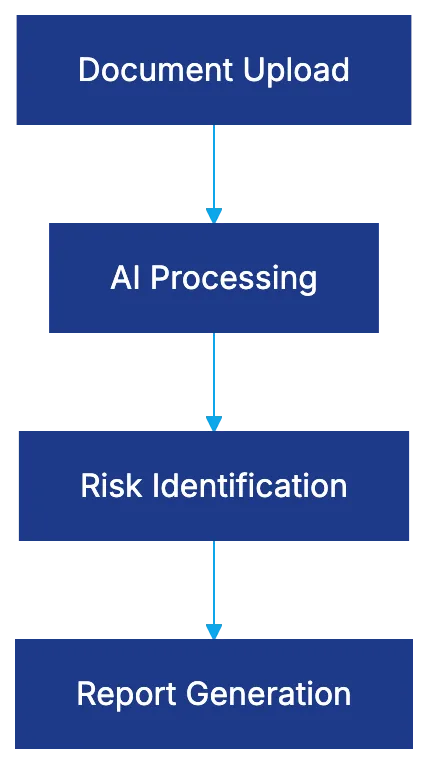

Citation Verification Workflow:

Document each verification step for accountability.
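One way to document the verification steps listed above is a simple per-citation record. The Python sketch below is a hypothetical structure, not a prescribed format; the class name, field names, and reviewer label are assumptions chosen to mirror the five checks in this section.

```python
from dataclasses import dataclass
from datetime import date

# Field names for the five checks described in this section (assumed labels).
STEPS = (
    "case_exists",            # located in Westlaw, Lexis, or a similar platform
    "quotes_match",           # quoted language matches the opinion text
    "proposition_supported",  # the case supports what it is cited for
    "still_good_law",         # Shepard's or KeyCite has been run
    "procedural_details_ok",  # procedural details confirmed
)


@dataclass
class CitationCheck:
    citation: str
    reviewer: str
    checked_on: date
    case_exists: bool = False
    quotes_match: bool = False
    proposition_supported: bool = False
    still_good_law: bool = False
    procedural_details_ok: bool = False

    def outstanding_steps(self):
        """List the checks that have not yet been completed."""
        return [step for step in STEPS if not getattr(self, step)]

    def is_cleared(self):
        """A citation is cleared for filing only when every check is done."""
        return not self.outstanding_steps()


record = CitationCheck(
    citation="410 U.S. 113 (1973)",
    reviewer="A. Paralegal",  # hypothetical reviewer
    checked_on=date(2025, 1, 15),
    case_exists=True,
    quotes_match=True,
)
print(record.outstanding_steps())
print(record.is_cleared())
```

Keeping one record per citation, with a named reviewer and date, gives a firm an audit trail if a court later asks how a filing was verified.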

Managing Confidentiality and Privilege Concerns

AI raises privilege and confidentiality risks because client data may be exposed to vendors. Understand each vendor's data policies, anonymize client information before submitting it, and consider deploying AI tools locally. Negotiate vendor contracts to ensure data protection.
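To illustrate the anonymization step, the sketch below masks a few obvious identifiers before text is sent to an outside tool. The patterns, placeholder labels, and the `redact` function are illustrative assumptions; pattern-based redaction misses many identifiers, so it supplements rather than replaces human review and vendor safeguards.

```python
import re

# Assumed patterns for a few common US-style identifiers; far from exhaustive.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[ .-]\d{3}[ .-]\d{4}\b"),
}


def redact(text, client_names=()):
    """Replace known client names and pattern-matched identifiers with labels."""
    # Names must be supplied by the user; the code cannot discover them.
    for name in client_names:
        text = re.sub(re.escape(name), "[CLIENT]", text, flags=re.IGNORECASE)
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


# All personal details below are invented for the example.
raw = "Jane Doe (jane.doe@example.com, 555-123-4567, SSN 123-45-6789) signed."
print(redact(raw, client_names=["Jane Doe"]))
# prints: [CLIENT] ([EMAIL], [PHONE], SSN [SSN]) signed.
```

Facts that identify a client indirectly, such as a distinctive job title or a described event, pass through untouched, which is why redacted text still warrants a human read before it leaves the firm.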

The Bottom Line

AI legal tools provide real benefits when paired with rigorous verification. They speed up drafting and improve formatting, but high hallucination rates demand constant oversight. Court orders and ABA guidance make clear that attorneys remain fully responsible for verifying everything AI produces.

Legal professionals must balance time savings against verification burdens for AI tools.

Frequently Asked Questions

What are AI legal writing tools, and how do they help in legal practice?

AI legal writing tools assist in creating drafts for legal documents, ensuring style consistency, and checking citations. They can help streamline the drafting process, saving time for attorneys while maintaining a structured framework for legal arguments.

What is the hallucination rate, and why is it a concern for legal professionals?

The hallucination rate refers to the frequency with which AI generates inaccurate or fabricated information. High rates, such as those found in studies of various AI tools, pose significant risks as legal professionals could unknowingly rely on erroneous content, leading to sanctions or malpractice claims.

What legal repercussions have attorneys faced due to AI-generated errors?

Attorneys have faced sanctions for relying on inaccurate AI outputs, as seen in cases like Mata v. Avianca and Noland v. Land of the Free. Both cases involved serious consequences for the attorneys, emphasizing the need for careful verification of AI-generated information.

What guidelines exist for using AI tools in legal writing?

Guidelines such as ABA Formal Opinion 512 clarify that attorneys must verify AI-generated citations, understand the tool's limitations, and supervise their use. These guidelines emphasize the necessity of maintaining accuracy and compliance within legal practices.

How can attorneys verify citations generated by AI tools?

Attorneys should verify AI-generated citations by confirming the existence of cases through reliable legal platforms like Westlaw or Lexis, matching quoted text with the actual sources, and checking case validity using services such as Shepard's or KeyCite.

What best practices should attorneys follow when using AI for drafting legal documents?

Best practices include using AI to draft routine motions or memos while ensuring human review and compliance with citation guidelines. Attorneys should document each verification step and maintain vigilant oversight to mitigate the risks associated with AI outputs.

How can attorneys manage confidentiality risks when using AI tools?

To manage confidentiality risks, attorneys should understand vendor data policies, anonymize sensitive client information, and negotiate contracts that ensure data protection. Careful examination of how data is handled by AI vendors is vital to maintaining client confidentiality.
